- Claude Code → ~/.claude/skills/jank-cloud/SKILL.md

- Cursor / VS Code → project root or your AI plugin's skills directory

- ChatGPT / Custom GPTs → paste the contents into your system prompt or knowledge base

- Anything else → upload the file as context or paste it inline

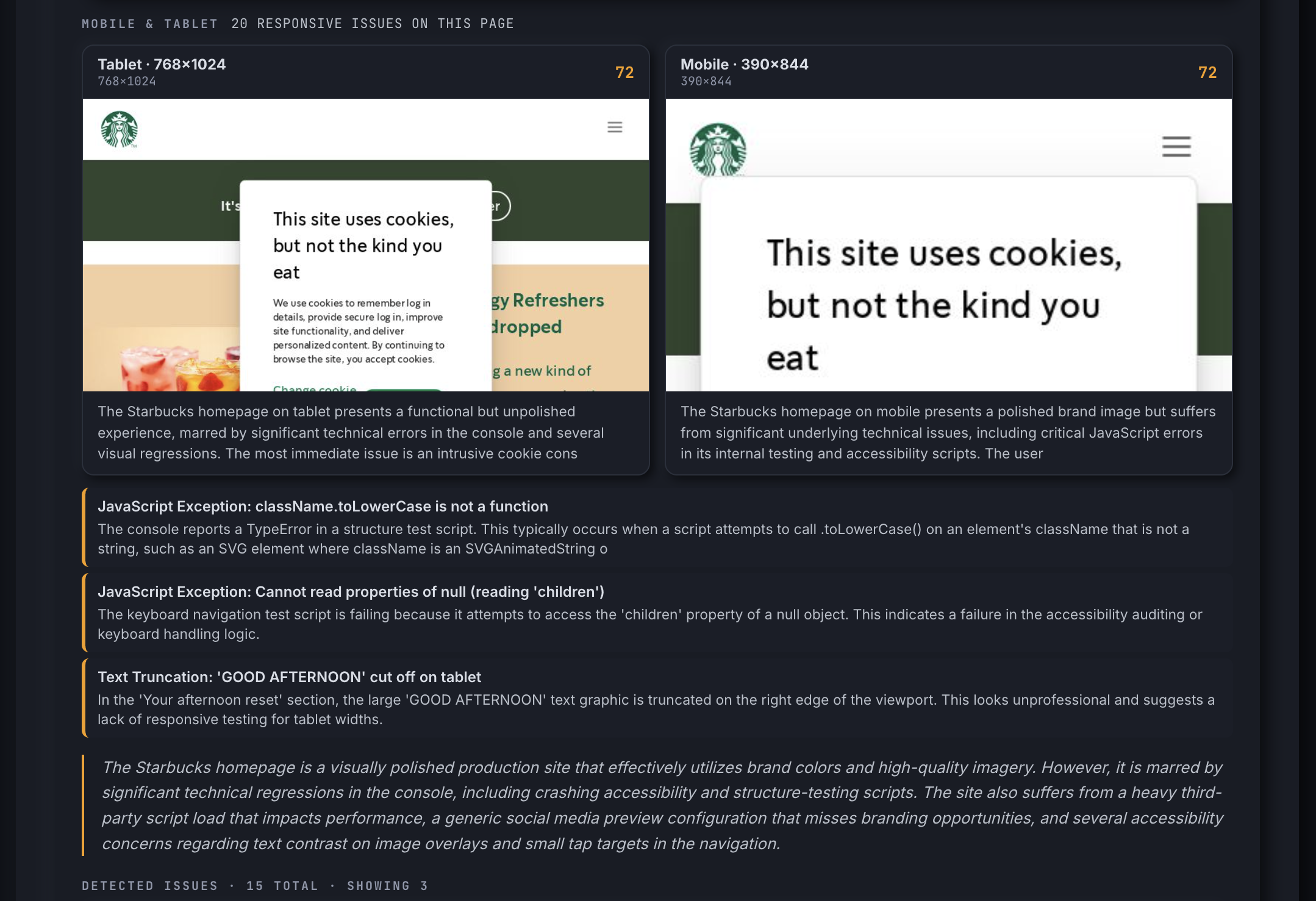

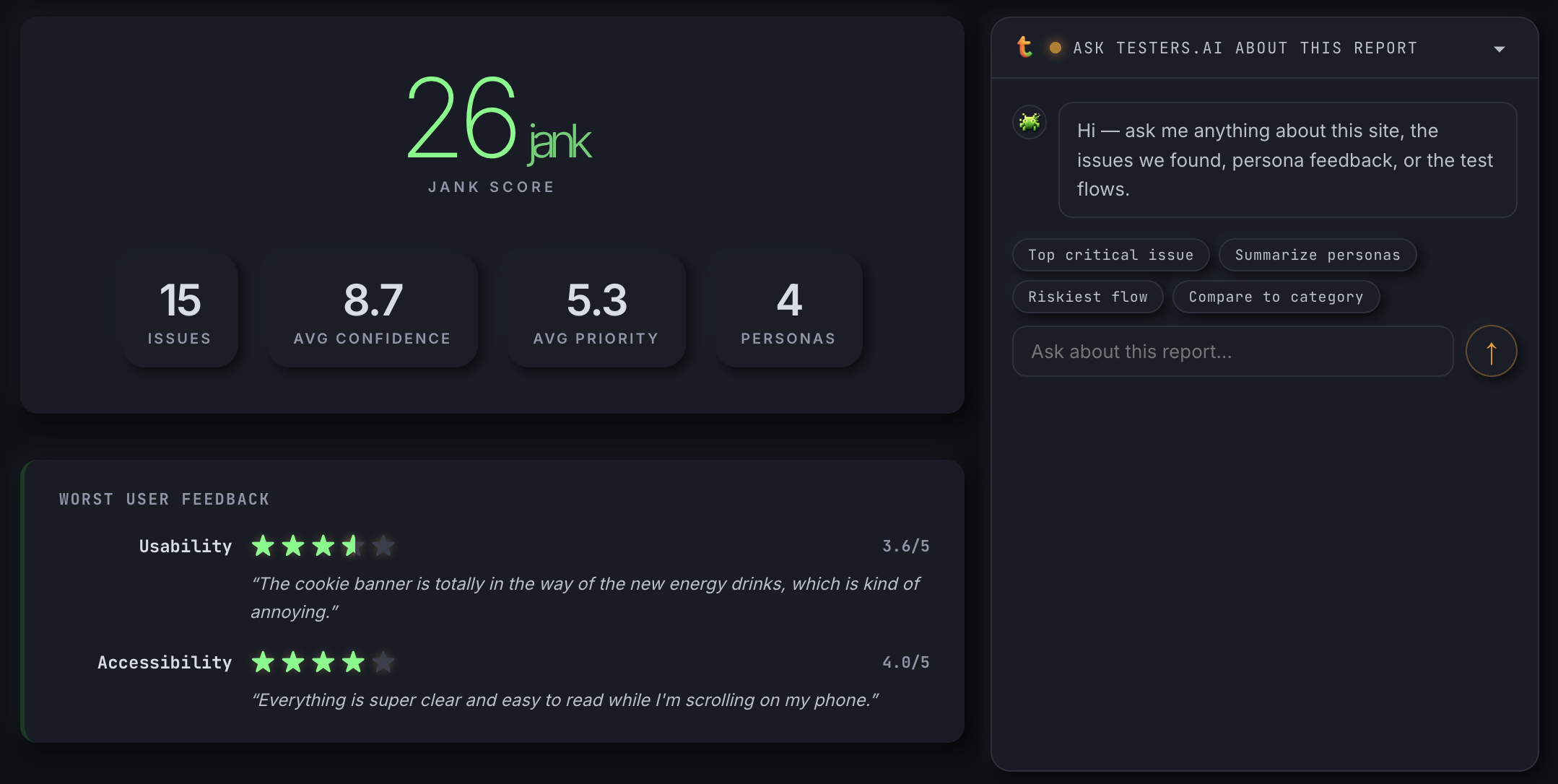

jank https://bing.com

The first LLM fine-tuned for QA & testing. OpenAI-compatible — drop it into any client by swapping the base URL. Free to start.

- model.testers.ai

- tai-1 & tai-1-tuned

- Streaming SSE

- 6 modes

No credit card · email verification sent instantly


https://model.testers.ai

curl https://model.testers.ai/v1/chat/completions \

-H "Authorization: Bearer <your-key>" \

-d '{"model":"tai-1","messages":[{"role":"user","content":"Find bugs on https://bing.com"}]}'


An AI Model Built for Software Quality and Confidence


curl https://model.testers.ai/v1/chat/completions \

-H "Authorization: Bearer tai_live_demo" \

-H "Content-Type: application/json" \

-d '{

"model": "tai-1",

"messages": [

{

"role": "system",

"content": "Prioritise WCAG 2.2 AA issues. Ignore marketing copy."

},

{

"role": "user",

"content": "<html>...</html>"

}

],

"tai": {"mode": "balanced", "routing": "auto", "max_cost_usd": 0.05}

}'

# The system message is optional — omit it for default behaviour.

# It is merged with tai.steering and forwarded to every model leaf.

const res = await fetch('https://model.testers.ai/v1/chat/completions', {

method: 'POST',

headers: {

'Authorization': 'Bearer tai_live_demo',

'Content-Type': 'application/json'

},

body: JSON.stringify({

model: 'tai-1',

messages: [

{ role: 'system', content: 'Prioritise WCAG 2.2 AA issues. Ignore marketing copy.' },

{ role: 'user', content: html }

],

tai: { mode: 'balanced', routing: 'auto', max_cost_usd: 0.05 }

})

});

const data = await res.json();

import requests

resp = requests.post(

'https://model.testers.ai/v1/chat/completions',

headers={'Authorization': 'Bearer tai_live_demo'},

json={

'model': 'tai-1',

'messages': [

{'role': 'system', 'content': 'Prioritise WCAG 2.2 AA issues. Ignore marketing copy.'},

{'role': 'user', 'content': html},

],

'tai': {'mode': 'balanced', 'routing': 'auto', 'max_cost_usd': 0.05}

}

)

print(resp.json()['choices'][0]['message']['content'])

Test as you build: inside Claude, Codex & more

The AI testing plugin runs directly inside your coding environment. As your AI writes code, it audits in real time — finding bugs, regressions, and quality gaps before they ever reach review.

Can I bring my own LLM?

Yes. Pick from Anthropic Claude, OpenAI (GPT-4o / GPT-5 etc.), Google Gemini, or Azure OpenAI. For fully air-gapped or zero-egress setups, point the platform at a self-hosted endpoint (Ollama, vLLM, LocalAI, or any OpenAI-compatible API). Provider + model are passed per-request via the provider / model fields, or set globally per deployment.
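As a rough illustration of the per-request override, the sketch below adds provider and model to a request body. The two field names come from the answer above; the helper name, the placeholder values, and the assumption that both fields sit at the top level of the body are hypothetical and should be checked against the API reference.

```python
import json


def with_llm_override(body: dict, provider: str, model: str) -> dict:
    """Return a copy of a request body with a per-request LLM selection.

    Assumption: `provider` and `model` are top-level fields. The text names
    the fields but does not show where they sit in the request.
    """
    if not provider or not model:
        raise ValueError("provider and model must both be non-empty")
    merged = dict(body)  # shallow copy so the caller's dict is untouched
    merged["provider"] = provider
    merged["model"] = model
    return merged


base = {"urls": ["https://example.com"]}
# "ollama" and "llama3" are placeholder values, not documented identifiers.
request_body = with_llm_override(base, provider="ollama", model="llama3")
print(json.dumps(request_body, indent=2))
```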

Can I self-host on my own private network?

Yes — three ways:

- Docker / Docker Compose: one-line bring-up via cloud/enterprise/docker-compose.yml.

- Kubernetes: manifests in cloud/enterprise/kubernetes/; tested on EKS, GKE, AKS, and bare-metal k3s.

- Single VM: clone, set ADMIN_TOKEN + LLM key, then run docker compose up -d --build. Up in under 10 minutes.

All three ship as the same Node + Playwright + cloudflared image, with Firestore (or any Firestore-API-compatible backend) for metadata and a configurable object store for artifacts.

Can I run fully air-gapped?

Yes. Pair a self-hosted deployment with a self-hosted LLM endpoint (Ollama / vLLM / LocalAI) and the entire system runs without outbound internet — neither testers.ai nor any LLM vendor sees your traffic or your reports. The hosted UI, the runner, the LLM call, and the artifact store all live on your network.

How do I tunnel into private / VPN-protected targets?

The runner can bring up a tunnel for the duration of a single test, then tear it down. Supported tunnel types:

- Tailscale — join the runner to your tailnet; address the target by its tailnet hostname.

- cloudflared — runs the Cloudflare connector inside the runner container.

- ngrok — for ad-hoc reverse tunnels.

- SSH reverse — opens an SSH reverse forward to your jump host.

- WireGuard, OpenVPN, IPSec — supported on self-hosted deployments.

- GCP VPC connector — for managed Cloud Run deployments inside your GCP project.

- Reverse proxy — pass-through if your target is already exposed via a corporate reverse-proxy host.

Can I import / export tests + findings?

Yes. Every stored report renders to multiple formats on demand:

- JSON: full report (issues, severity, evidence, persona reviews, flow steps, screenshots, timing). Stable schema, version-tagged. GET /r/:id.json

- Markdown: a human-readable report with embedded screenshots and one fix-prompt per issue. GET /r/:id.md

- TXT: a flat list of every issue's prompt-to-fix-this-issue, ready to pipe into your AI coding agent (Claude, Cursor, Copilot, Antigravity).

- HTML: the shareable web report (/r/:id), with the report itself shareable as a permanent URL.
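A minimal sketch of how the export routes above could be derived from a report ID. The /r/:id, /r/:id.json, and /r/:id.md paths and the hosted base URL come from this page; the helper name is hypothetical, and the TXT route is left out because its path is not given here.

```python
from urllib.parse import quote

HOSTED_BASE = "https://reports.testers.ai"  # hosted base URL; self-hosted deployments differ


def export_urls(report_id: str, base_url: str = HOSTED_BASE) -> dict:
    """Build the HTML, JSON, and Markdown export URLs for one report."""
    rid = quote(report_id, safe="")  # guard against stray path characters
    root = base_url.rstrip("/")
    return {
        "html": f"{root}/r/{rid}",
        "json": f"{root}/r/{rid}.json",
        "markdown": f"{root}/r/{rid}.md",
    }


urls = export_urls("387ee94b-2834-49bc-b832-223e66e32d34")
for fmt, url in urls.items():
    print(fmt, url)
```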

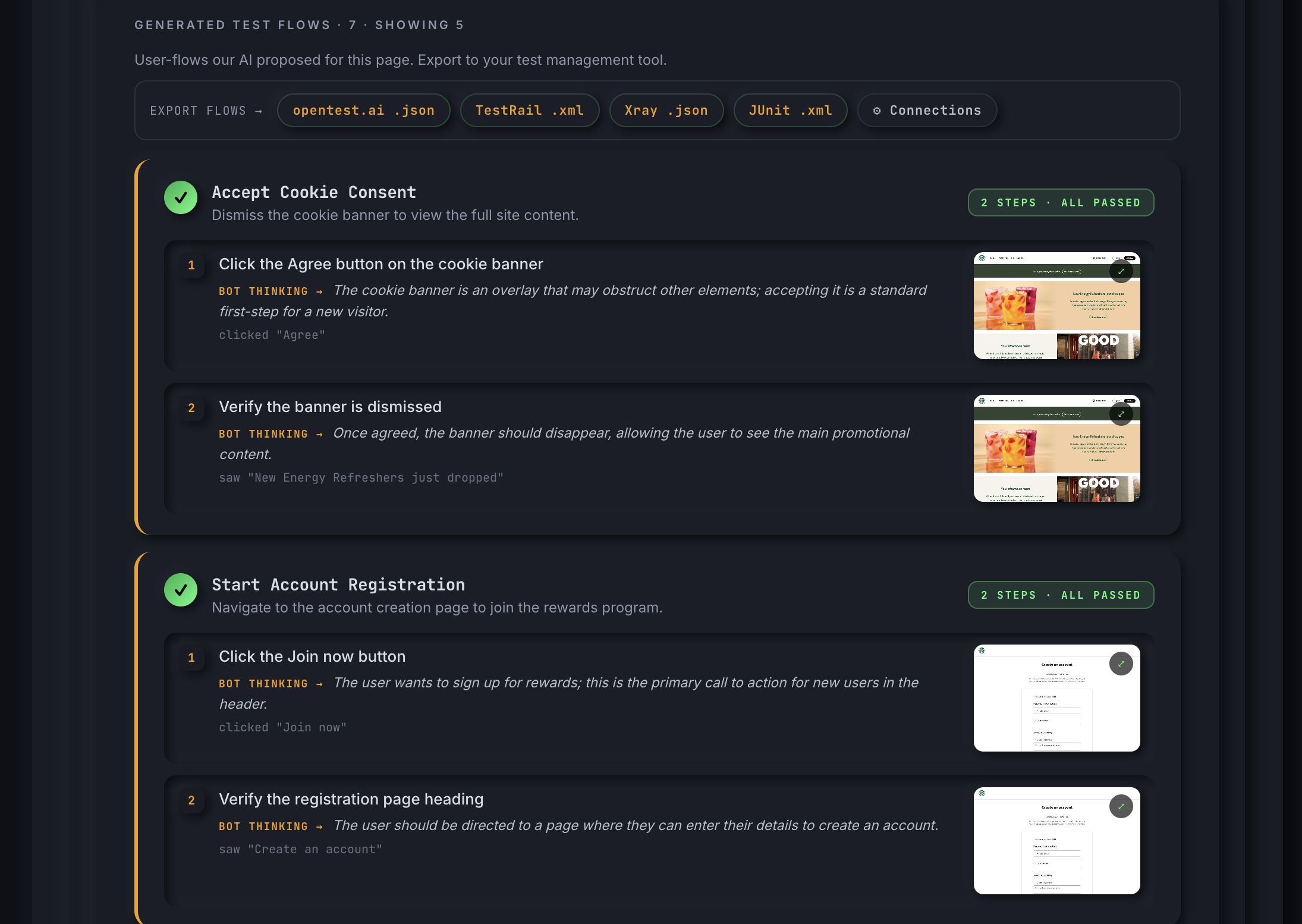

Test cases can also be exported to CSV, Jira, TestRail, or Xray directly from the chat UI.

Can reports be shared?

Yes. Every run gets a permanent shareable URL (https://reports.testers.ai/r/<id> on hosted, or your equivalent base URL on self-host). You control access per run: visibility: "public" lets anyone with the link view the report, while visibility: "private" gates it behind the admin token. An optional emails list sends a "report ready" email when a run completes.

How long does a run take?

A full multi-dimensional run (bug finding + exploratory + functional + competitive + personas + accessibility + crawl) typically lands in ~12–15 minutes. Smaller scoped runs (single-page bugs only, no personas, no flows) finish in 3–5 minutes. Every agent runs in parallel — adding more dimensions doesn't multiply the runtime, it just lights up more lanes.

What can I configure per run?

- URLs — 1 to 25 per submission, batch-mode supported.

- Subpages — let the AI pick N additional pages from the entry URL (or disable).

- Flows — generate N test flows; pass

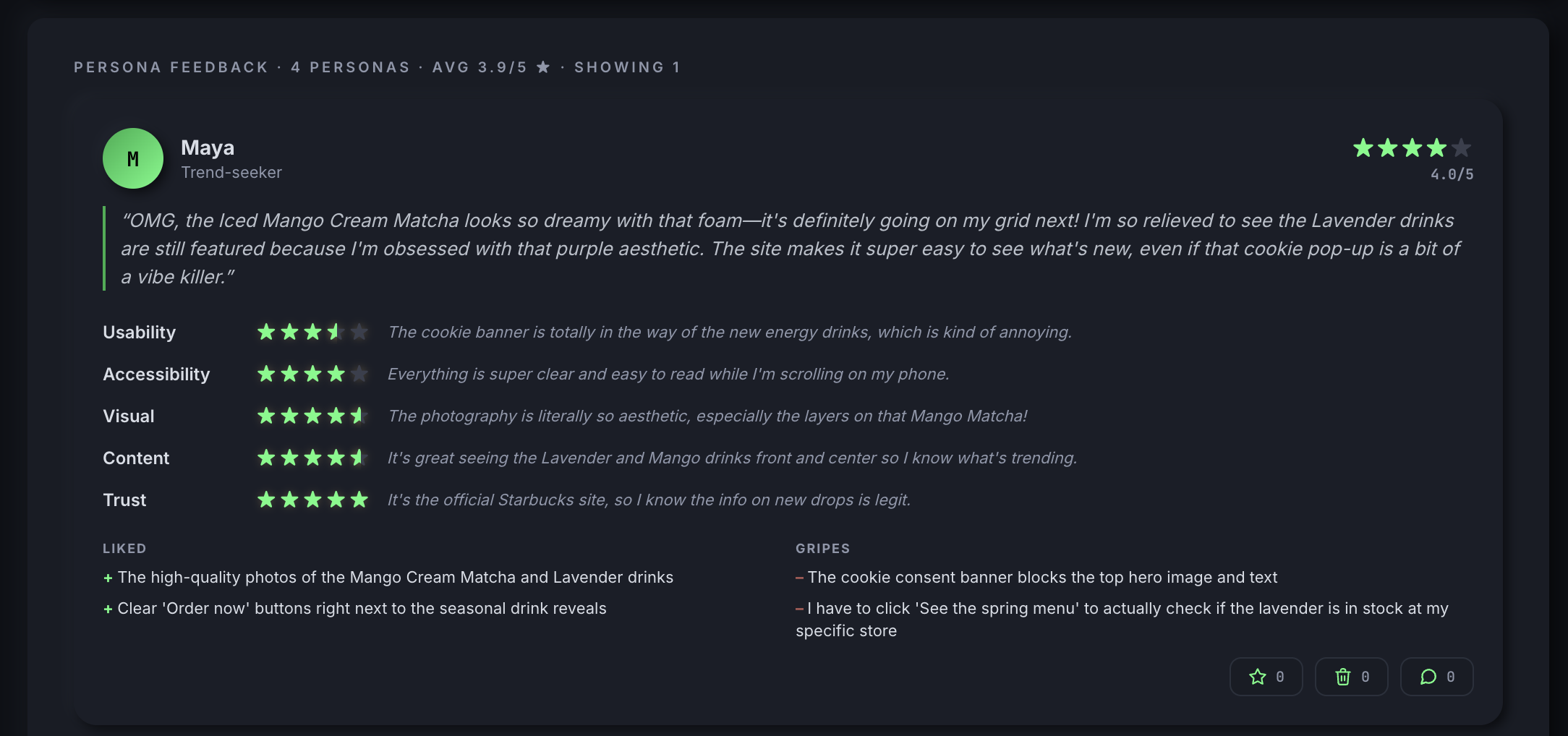

customPromptto steer the agent (e.g., "focus on the checkout funnel"). - Personas — generate N persona reviews with optional

customPromptto bias toward your audience. - Provider + model — pick LLM per-run.

- Visibility — public / private / admin-token gated.

- Tunnel spec — Tailscale, cloudflared, ngrok, SSH, WireGuard, OpenVPN, IPSec, GCP VPC.

- Email notifications — comma-separated list of recipients per run.

- Custom checks — per-brand / per-customer test rules layered on top of the standard suite.

- Label — free-form tag for grouping in the admin dashboard.

Does it have a REST API + CLI?

Yes. POST /api/reports with a JSON list of URLs and the runner returns report IDs immediately; poll GET /api/reports/:id for status, fetch /r/:id.json for the result. Auth is via an X-Api-Key header. There's also a scripts/submit.sh curl wrapper bundled with the cloud package, and a CLI runner for CI pipelines (GitHub Actions, GitLab CI, Jenkins, CircleCI).

What about admin / ops?

An admin dashboard at /admin shows every report, its queue/running/done state in real time, with one-click retry on failures. Per-key quotas, per-account demo limits, and a separate ops API (see docs/api-internal.md) cover the operator side. Artifacts are versioned in object storage; metadata and run state live in Firestore (or a Firestore-compatible store on self-host).

Want AI Testing Experts to Run It For You?

IcebergQA is a different category of QA service — built around AI from the start, not retrofitted onto a manual practice. Senior QA engineers run the AI agents, curate the findings, and deliver a clear roadmap. You get the speed of automation with the judgment of experience — so your team ships with confidence, no matter how fast.

Led by Jason Arbon (testers.ai, ex-Google/Microsoft) and Phil Lew (XBOSoft, 20+ years enterprise QA) — the team behind testers.ai.


"The next era of engineering will be defined by who can justify confidence in what they generate."

AI now generates more code than any team can fully read or understand. Software ships continuously — faster than the verification models built around it.

94% code coverage and 10,000 passing tests are not confidence. They are the illusion of diligence. Vanity metrics that give teams permission to ship without evidence.

Perfect software isn't achievable. But confidence is. Confidence is knowing — with real evidence — what works, what breaks, and where risk lives right now.

Ship with real evidence, not optimism. The teams that win in the GenAI era will be the ones who can prove what they're shipping is ready.

Get in Touch


Questions about testing, pricing, or want us to test your app? We read every message.

🔌 Request Plugin Early Access

Tell us which plugin you're interested in and how you're using AI in your workflow. We'll send setup instructions as soon as your slot is ready.

Contact IcebergQA


Senior QA engineers + AI tooling — we'll reach out within one business day.

Request Testers.ai Unlock Code

Sign up and we'll send you an unlock code that removes free-trial rate limits on this chat and the AI-based testing tools.

Have our experts run AI testing for your app

Drop a few details and one of our test engineers will reach out to scope a run against your app — bugs, accessibility, persona feedback, and a comprehensive quality report.

Chat Context

Add specifications, test plans, API docs, requirements — anything the assistant should treat as ground truth. Each entry is sent with every query. Add as many as you need.

Get the report for your app

Zero effort. AI finds your most important escaped issues, persona feedback, and regression-testing gaps — and shows how you compare to category peers.

- Full report unlocked

- Bugs across 7 categories

- Persona feedback findings

- Category benchmarks

- Competitive intelligence

- Everything in Standard

- Human expert review

- Custom testing & reporting

- Pre-production & on-premise


We tailor responses and recommended tools to your role. VP/Exec gets quality-analytics emphasis; engineers get technical depth.

Controls UI labels and the language the assistant replies in.

Free-trial proxy. Optional: attach your email / unlock code for higher limits.

Don't have an unlock code? Request an unlock code →

Calls OpenAI directly from your browser. Key is stored in this browser only.

Calls Anthropic directly from your browser. Key is stored in this browser only.

Calls Gemini directly from your browser. Key is stored in this browser only.

Connect Jira, TestRail, or Xray to auto-file bugs & tests, and to pull existing issues/tests into chat context. Beta — requires your org to allow CORS from this page; if filing fails, use the CSV exports.

TestRail admin → My Settings → API Keys.

Xray lives inside Jira. Uses your Jira credentials above. Fill in project + test plan to auto-link filed tests.

Submit one or more URLs for analysis. Returns immediately with report ID(s); analysis runs asynchronously.

curl -X POST https://reports.testers.ai/api/reports \

-H "X-Api-Key: jk_YOUR_KEY" \

-H "X-Account: you@example.com" \

-H "Content-Type: application/json" \

-d '{

"urls": ["https://example.com"],

"visibility": "public",

"intensity": "standard",

"subpages": { "enabled": true, "count": 2 },

"personas": { "enabled": true, "count": 4 },

"flows": { "enabled": true, "count": 5 }

}'

import requests

resp = requests.post(

"https://reports.testers.ai/api/reports",

headers={

"X-Api-Key": "jk_YOUR_KEY",

"X-Account": "you@example.com",

"Content-Type":"application/json",

},

json={

"urls": ["https://example.com"],

"visibility": "public",

"intensity": "standard",

"subpages": {"enabled": True, "count": 2},

"personas": {"enabled": True, "count": 4},

"flows": {"enabled": True, "count": 5},

},

timeout=30,

)

resp.raise_for_status()

report_id = resp.json()["created"][0]["id"]

print("Queued:", report_id)

// Node.js 18+ or any modern browser

const resp = await fetch("https://reports.testers.ai/api/reports", {

method: "POST",

headers: {

"X-Api-Key": "jk_YOUR_KEY",

"X-Account": "you@example.com",

"Content-Type": "application/json",

},

body: JSON.stringify({

urls: ["https://example.com"],

visibility: "public",

intensity: "standard",

subpages: { enabled: true, count: 2 },

personas: { enabled: true, count: 4 },

flows: { enabled: true, count: 5 },

}),

});

if (!resp.ok) throw new Error(`HTTP ${resp.status}`);

const { created } = await resp.json();

console.log("Queued:", created[0].id);

{

"created": [{

"id": "387ee94b-2834-49bc-b832-223e66e32d34",

"url": "https://example.com/",

"viewUrl": "/r/387ee94b-2834-49bc-b832-223e66e32d34"

}]

}

| Field | Type | Notes |
|---|---|---|
| urls | string[] | 1–25 URLs. Scheme auto-prefixed. |
| visibility | "public" \| "private" | Default: public |
| intensity | "standard" \| "deep" | deep = 3× credits, VPAT |
| subpages | object \| false | {enabled, count}: AI crawls N extra pages |
| personas | object \| false | {enabled, count}: N persona reviews |
| flows | object \| false | {enabled, count}: N generated test flows |
| label | string | Optional tag shown in dashboard |
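The field table can be turned into a small client-side check before submitting. This is a sketch only: the field names, allowed values, and the 1 to 25 URL limit come from the table, while the function names and the choice to map a zero count to false are assumptions made for illustration.

```python
ALLOWED_VISIBILITY = {"public", "private"}
ALLOWED_INTENSITY = {"standard", "deep"}
MAX_URLS = 25  # documented per-request limit


def _section(count):
    """Map a count to the documented `object | false` shape (assumed mapping)."""
    if count is None or count <= 0:
        return False
    return {"enabled": True, "count": count}


def build_submission(urls, visibility="public", intensity="standard",
                     subpages=0, personas=0, flows=0, label=None):
    """Validate inputs against the field table and return a request body."""
    urls = list(urls)
    if not 1 <= len(urls) <= MAX_URLS:
        raise ValueError(f"expected 1 to {MAX_URLS} URLs, got {len(urls)}")
    if visibility not in ALLOWED_VISIBILITY:
        raise ValueError(f"unknown visibility: {visibility!r}")
    if intensity not in ALLOWED_INTENSITY:
        raise ValueError(f"unknown intensity: {intensity!r}")
    body = {
        "urls": urls,
        "visibility": visibility,
        "intensity": intensity,
        "subpages": _section(subpages),
        "personas": _section(personas),
        "flows": _section(flows),
    }
    if label is not None:
        body["label"] = label
    return body


body = build_submission(["https://example.com"], subpages=2, personas=4, flows=5)
print(body)
```

Failing fast on the client avoids spending a daily quota slot on a request the server would reject anyway.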

Poll every 5s while status is queued or running. Add ?slim=1 for a lightweight polling response (<5KB). Drop it when done for the full report.

# Poll while running

curl "https://reports.testers.ai/api/reports/REPORT_ID?slim=1" \

-H "X-Api-Key: jk_YOUR_KEY" \

-H "X-Account: you@example.com"

# Full report once done

curl "https://reports.testers.ai/api/reports/REPORT_ID" \

-H "X-Api-Key: jk_YOUR_KEY" \

-H "X-Account: you@example.com"

import time, requests

REPORT_ID = "387ee94b-…"

H = {"X-Api-Key": "jk_YOUR_KEY", "X-Account": "you@example.com"}

URL = f"https://reports.testers.ai/api/reports/{REPORT_ID}"

# Poll lightweight endpoint every 5s until done

while True:

    r = requests.get(URL + "?slim=1", headers=H, timeout=15).json()

    if r["status"] in ("done", "failed", "blocked"):

        break

    print(f"status={r['status']} pct={r.get('progress',{}).get('percent',0)}")

    time.sleep(5)

# Fetch the full report once status is done

report = requests.get(URL, headers=H, timeout=30).json()

print("Score:", report["analysis"]["score"])

for issue in report["analysis"]["issues"][:5]:

    print("-", issue["bug_title"])

const REPORT_ID = "387ee94b-…";

const headers = { "X-Api-Key": "jk_YOUR_KEY", "X-Account": "you@example.com" };

const URL = `https://reports.testers.ai/api/reports/${REPORT_ID}`;

// Poll lightweight endpoint every 5s

const sleep = (ms) => new Promise((r) => setTimeout(r, ms));

while (true) {

const r = await (await fetch(`${URL}?slim=1`, { headers })).json();

if (["done", "failed", "blocked"].includes(r.status)) break;

console.log(`status=${r.status} pct=${r.progress?.percent ?? 0}`);

await sleep(5000);

}

// Fetch the full report once done

const report = await (await fetch(URL, { headers })).json();

console.log("Score:", report.analysis.score);

for (const issue of report.analysis.issues.slice(0, 5)) {

console.log("-", issue.bug_title);

}

{

"status": "done",

"analysis": {

"score": 24,

"issues": [{

"bug_title": "Submit button has insufficient contrast",

"bug_type": ["accessibility"],

"bug_priority": 2,

"bug_severity": "moderate",

"prompt_to_fix_this_issue": "Paste into Claude Code / Cursor: …"

}]

},

"personaFeedback": { "personas": [ … ] },

"testFlows": { "flows": [ … ] },

"baselines": { "rank": { "betterThanPct": 68 } },

"artifacts": { "entryScreenshotUrl": "https://…" }

}
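Once a full report is fetched, a short summary is often more useful than the raw JSON. The sketch below tallies issues by severity and type, using only keys that appear in the sample response above; the summarize helper and the shape of its return value are hypothetical, and real reports may carry fields not shown in the sample.

```python
from collections import Counter

# Trimmed copy of the sample response, limited to the fields used below.
sample_report = {
    "status": "done",
    "analysis": {
        "score": 24,
        "issues": [{
            "bug_title": "Submit button has insufficient contrast",
            "bug_type": ["accessibility"],
            "bug_priority": 2,
            "bug_severity": "moderate",
        }],
    },
}


def summarize(report: dict) -> dict:
    """Count issues by severity and by type; tolerate missing sections."""
    analysis = report.get("analysis") or {}
    issues = analysis.get("issues") or []
    by_severity = Counter(i.get("bug_severity", "unknown") for i in issues)
    # bug_type is a list in the sample, so one issue can count toward several types.
    by_type = Counter(t for i in issues for t in i.get("bug_type", []))
    return {
        "score": analysis.get("score"),
        "total": len(issues),
        "by_severity": dict(by_severity),
        "by_type": dict(by_type),
    }


summary = summarize(sample_report)
print(summary)
```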

https://reports.testers.ai/r/REPORT_ID.json # full JSON

https://reports.testers.ai/r/REPORT_ID.md # Markdown (paste into docs/issues)

List all reports for your account, newest first.

curl "https://reports.testers.ai/api/reports?limit=20" \

-H "X-Api-Key: jk_YOUR_KEY" \

-H "X-Account: you@example.com"

| Param | Notes |
|---|---|
| limit | Max results (default 20, max 100) |
| before | Cursor: timestamp for pagination |
| status | Filter by: queued \| running \| done \| failed |
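A small sketch of building the list query from the parameters in the table. The parameter names, the limit bounds, and the status values are taken from the table; the helper name is hypothetical, and before is passed through as an opaque value because the timestamp format is not specified here.

```python
from urllib.parse import urlencode

LIST_ENDPOINT = "https://reports.testers.ai/api/reports"
KNOWN_STATUSES = {"queued", "running", "done", "failed"}


def list_reports_url(limit=20, before=None, status=None):
    """Return the list URL with validated query parameters."""
    if not 1 <= limit <= 100:
        raise ValueError("limit must be between 1 and 100")
    if status is not None and status not in KNOWN_STATUSES:
        raise ValueError(f"unknown status filter: {status!r}")
    params = {"limit": limit}
    if before is not None:
        params["before"] = before  # cursor from a previous page, format not documented here
    if status is not None:
        params["status"] = status
    return f"{LIST_ENDPOINT}?{urlencode(params)}"


url = list_reports_url(limit=50, status="done")
print(url)
```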

Ask questions about a specific report. The server attaches a compact summary of issues, personas, flows, and baselines as context.

curl -X POST \

"https://reports.testers.ai/api/reports/REPORT_ID/chat" \

-H "X-Api-Key: jk_YOUR_KEY" \

-H "X-Account: you@example.com" \

-H "Content-Type: application/json" \

-d '{

"messages": [

{"role": "user", "content": "What is the most critical issue?"}

]

}'

{ "reply": "The highest-priority issue is …" }
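For reference, the same chat call can be prepared from Python with only the standard library. The endpoint, headers, request body, and the reply key mirror the curl example and response above; the helper name is hypothetical, and the request is only constructed here, not sent.

```python
import json
import urllib.request


def build_chat_request(report_id, question, api_key, account):
    """Prepare a POST to the report chat endpoint without sending it."""
    url = f"https://reports.testers.ai/api/reports/{report_id}/chat"
    payload = {"messages": [{"role": "user", "content": question}]}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "X-Api-Key": api_key,
            "X-Account": account,
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request(
    "REPORT_ID", "What is the most critical issue?", "jk_YOUR_KEY", "you@example.com"
)
print(req.get_method(), req.full_url)

# To send it (needs a real key and report ID):
# with urllib.request.urlopen(req, timeout=30) as resp:
#     print(json.load(resp)["reply"])
```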

| Mode | Headers | Quota |
|---|---|---|
| Demo | X-Account: you@email.com | 1 report/day · 1 URL |
| API key | X-Api-Key: jk_… + X-Account: … | 25/day · 25 URLs/req |

Sign in → click your avatar → Generate API key. Keys have the format jk_…

# Every request needs both headers

X-Api-Key: jk_YOUR_KEY

X-Account: you@example.com

25 reports/day per key · up to 25 URLs per request · reports are kept indefinitely · screenshots retained 90 days.
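Since a request accepts at most 25 URLs, longer lists need to be split before submission. The sketch below shows one way to do that; the helper name is hypothetical, and whether the daily quota counts requests or individual reports is not spelled out here, so the batching alone does not guarantee staying under it.

```python
MAX_URLS_PER_REQUEST = 25  # documented per-request limit


def batch_urls(urls, size=MAX_URLS_PER_REQUEST):
    """Split a URL list into consecutive chunks of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    urls = list(urls)
    return [urls[i:i + size] for i in range(0, len(urls), size)]


pages = [f"https://example.com/page/{n}" for n in range(60)]
batches = batch_urls(pages)
print([len(b) for b in batches])  # prints [25, 25, 10]
```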

OpenAI-compatible endpoint — drop-in replacement. Quality-tuned for software testing tasks: bug finding, test generation, accessibility review.

curl -X POST https://api.testers.ai/v1/chat/completions \

-H "Authorization: Bearer YOUR_TAI_KEY" \

-H "Content-Type: application/json" \

-d '{

"model": "tai-1",

"messages": [

{"role": "system", "content": "You are a QA expert."},

{"role": "user", "content": "Review this login form for bugs."}

]

}'

from openai import OpenAI

client = OpenAI(

base_url="https://api.testers.ai/v1",

api_key="YOUR_TAI_KEY"

)

response = client.chat.completions.create(

model="tai-1",

messages=[

{"role": "user", "content": "Find accessibility bugs in this HTML."}

]

)

print(response.choices[0].message.content)

import OpenAI from "openai";

const client = new OpenAI({

baseURL: "https://api.testers.ai/v1",

apiKey: "YOUR_TAI_KEY",

});

const res = await client.chat.completions.create({

model: "tai-1",

messages: [{ role: "user", content: "Generate test cases for checkout." }],

});

console.log(res.choices[0].message.content);

All models are quality-tuned — optimised for software testing tasks vs. general-purpose LLMs.

# List available models

curl https://api.testers.ai/v1/models \

-H "Authorization: Bearer YOUR_TAI_KEY"

Authorization: Bearer YOUR_TAI_KEY

Same key as the Test Reports API (jk_… format). Sign in → profile → Generate API key.

# Before

base_url = "https://api.openai.com/v1"

api_key = "sk-…"

# After — same SDK, same code

base_url = "https://api.testers.ai/v1"

api_key = "YOUR_TAI_KEY"

All standard OpenAI SDK methods work: chat.completions, streaming, function calling, embeddings.
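To make the streaming claim concrete, here is a minimal sketch of reading a streamed reply without the SDK. It assumes the endpoint emits the same server-sent event framing as the OpenAI chat completions API (data: lines carrying JSON chunks, ended by data: [DONE]); that framing is not shown on this page, and the sample lines are made up for illustration.

```python
import json


def iter_stream_text(lines):
    """Yield text fragments from OpenAI-style SSE lines until [DONE]."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators and non-data fields
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text


# Hypothetical stream, shaped like OpenAI streaming chunks.
sample = [
    'data: {"choices":[{"delta":{"role":"assistant"}}]}',
    "",
    'data: {"choices":[{"delta":{"content":"Found "}}]}',
    "",
    'data: {"choices":[{"delta":{"content":"2 issues"}}]}',
    "",
    "data: [DONE]",
]
text = "".join(iter_stream_text(sample))
print(text)  # prints Found 2 issues
```

With the official SDK the same result should come from passing stream=True to chat.completions.create and concatenating each chunk's delta content.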