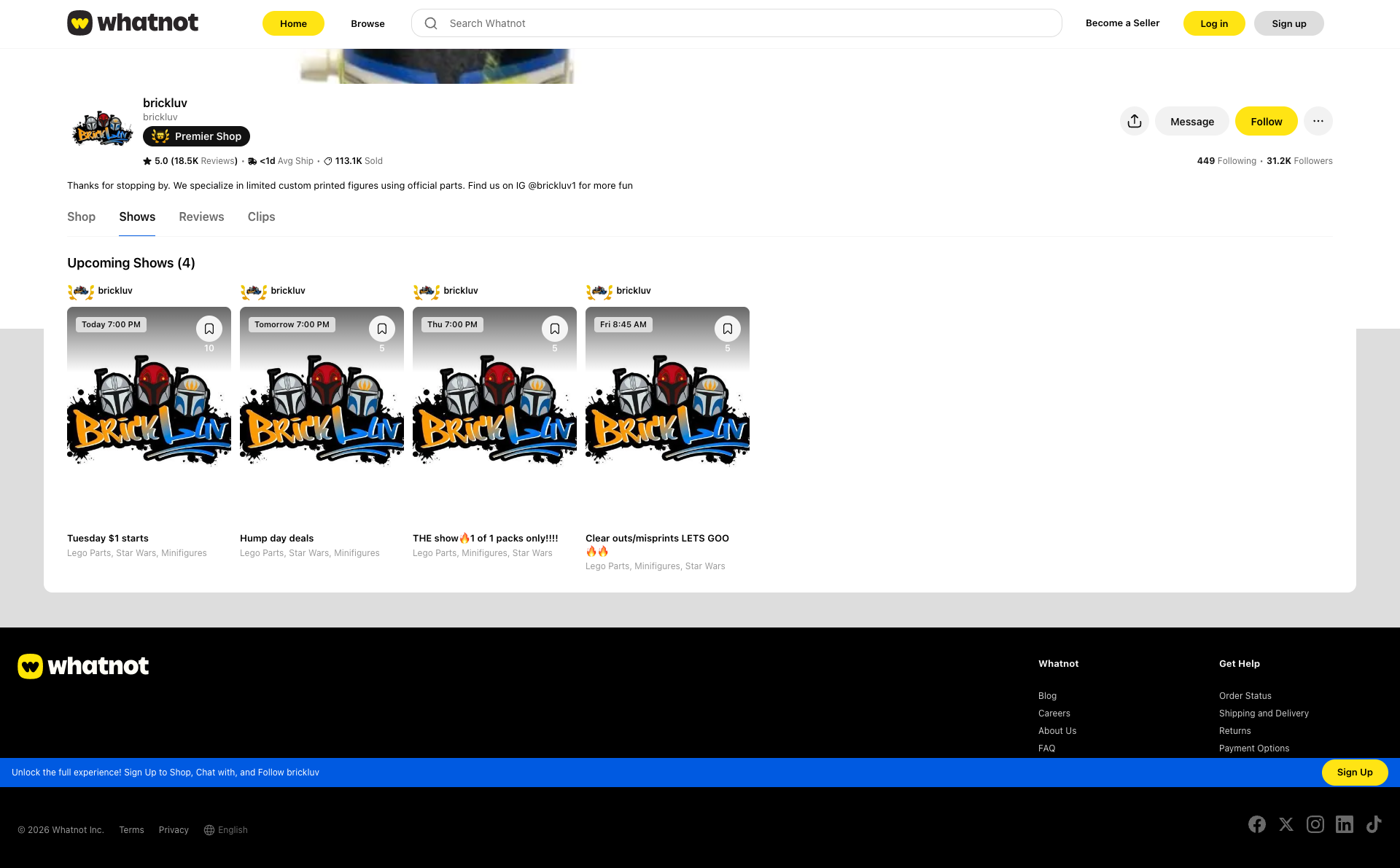

Whatnot scored B- (80%) with 324 issues across 7 tested pages, ranking #8 of 8 Testlio portfolio apps. That is about 125 more issues than the category average of 199.2, placing it in the 0th percentile.

Top issues to fix immediately:

- "Hero QR code for app download is too small to scan comfortably": Increase the QR code size to 180-240px on desktop, maintain aspect ratio, and add a clear text label 'Download Whatno...
- "HTTP 429 Too Many Requests on resource load": Identify the resource(s) returning 429 and implement retry with exponential backoff, introduce client-side request th...
- "Network DNS resolution failure (ERR_NAME_NOT_RESOLVED) for multiple re": Validate that all resource URLs point to resolvable domains.
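
The retry-with-exponential-backoff recommendation for the 429 responses can be sketched as follows. This is a minimal Python illustration, not Whatnot's code: the `fetch` callable, its `(status, retry_after, body)` return shape, `fetch_with_backoff`, and the default delays are all hypothetical. It uses a server-supplied `Retry-After` value when present and otherwise waits a random ("full jitter") delay that doubles its upper bound on each attempt.

```python
import random
import time
from typing import Callable, Optional, Tuple

# Hypothetical transport hook: takes a URL and returns
# (status_code, retry_after_seconds_or_None, body).
FetchFn = Callable[[str], Tuple[int, Optional[float], bytes]]


def fetch_with_backoff(
    url: str,
    fetch: FetchFn,
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, bytes]:
    """Call fetch(url), retrying on HTTP 429 with exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        status, retry_after, body = fetch(url)
        # Success, a non-429 error, or no attempts left: return this result.
        if status != 429 or attempt == max_attempts - 1:
            return status, body
        if retry_after is not None:
            # Prefer the server's Retry-After hint, capped at max_delay.
            delay = min(retry_after, max_delay)
        else:
            # Full jitter: uniform in [0, base_delay * 2**attempt], capped at max_delay.
            delay = random.uniform(0, min(max_delay, base_delay * 2 ** attempt))
        sleep(delay)
    raise AssertionError("unreachable: the loop always returns on its last attempt")
```

Injecting `sleep` keeps the function testable without real waiting. Backoff only spaces out retries; it does not lower the initial request rate, so it complements rather than replaces limiting requests on the client.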

Weakest area: usability (6/10). Little navigation is visible beyond the login, primary actions are unclear, and the QR code and 'How it works' CTA exist but without clear pa...

Quick wins: Add a persistent navigation bar with product categories, search, and filters for quick access. Improve accessibility: provide alt text for all images, use semantic headings, and support keyboard navigation; ensure color...
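
The alt-text part of the accessibility quick win can be checked automatically. Below is a minimal sketch using Python's standard `html.parser`; `images_missing_alt` and `MissingAltFinder` are hypothetical names. It flags only `<img>` tags with no `alt` attribute at all, treats an empty `alt=""` as an intentional marker for decorative images, and does not cover headings, keyboard navigation, or color contrast.

```python
from html.parser import HTMLParser
from typing import List, Optional


class MissingAltFinder(HTMLParser):
    """Collects the src of every <img> that has no alt attribute."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: List[Optional[str]] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names, so <IMG SRC=...> matches too.
        # Self-closing <img ... /> also reaches this method via the default
        # handle_startendtag implementation.
        if tag != "img":
            return
        attr_map = dict(attrs)
        if "alt" not in attr_map:
            self.missing.append(attr_map.get("src"))


def images_missing_alt(html: str) -> List[Optional[str]]:
    """Return the src values (or None) of images lacking an alt attribute."""
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing
```

For example, `images_missing_alt('<img src="qr.png"><img src="logo.png" alt="Logo">')` returns `["qr.png"]`.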

Jason · GenAI Code Analyzer

⚠️ AI/LLM ENDPOINT DETECTED

GET http://www.whatnot.com/privacy - Status: N/A; GET http://www.whatnot.com/privacy - Status: 307; INSECURE: HTTP (non-HTTPS) request GET http://www.whatnot.com/privacy

⚠️ AI/LLM ENDPOINT DETECTED: POST https://pactsafe.io/send - Status: N/A; GET https://pactsafe.io/send - Status: 200
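
The insecure `http://` request to `/privacy` above and the ERR_NAME_NOT_RESOLVED item among the top issues can both be screened for before deployment. The Python sketch below assumes you already have a list of resource URLs (for example, exported from a HAR file or a crawler). `audit_urls` and `system_resolves` are hypothetical helpers, and a lookup from the build machine may not match what users' resolvers see.

```python
import socket
from typing import Callable, Dict, Iterable, List
from urllib.parse import urlsplit


def system_resolves(host: str) -> bool:
    """True if the local resolver returns at least one address for host."""
    try:
        return len(socket.getaddrinfo(host, None)) > 0
    except (socket.gaierror, UnicodeError):
        return False


def audit_urls(
    urls: Iterable[str],
    resolves: Callable[[str], bool] = system_resolves,
) -> Dict[str, List[str]]:
    """Flag plain-HTTP URLs and URLs whose host does not resolve."""
    findings: Dict[str, List[str]] = {"insecure": [], "unresolvable": []}
    cache: Dict[str, bool] = {}  # resolve each distinct host only once
    for url in urls:
        parts = urlsplit(url)
        if parts.scheme == "http":  # urlsplit lowercases the scheme
            findings["insecure"].append(url)
        host = parts.hostname  # lowercased, with any port stripped
        if host is None:
            continue
        if host not in cache:
            cache[host] = resolves(host)
        if not cache[host]:
            findings["unresolvable"].append(url)
    return findings
```

Passing a stub `resolves` function lets the audit run in CI without network access; the default uses the system resolver via `socket.getaddrinfo`.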