Quality Score: B-80%

Pages: 6

Issues: 77

Avg Confidence: 7.7

Avg Priority: 7.6

27 Critical · 31 High · 19 Medium

>_ Testers.AI

AI Analysis

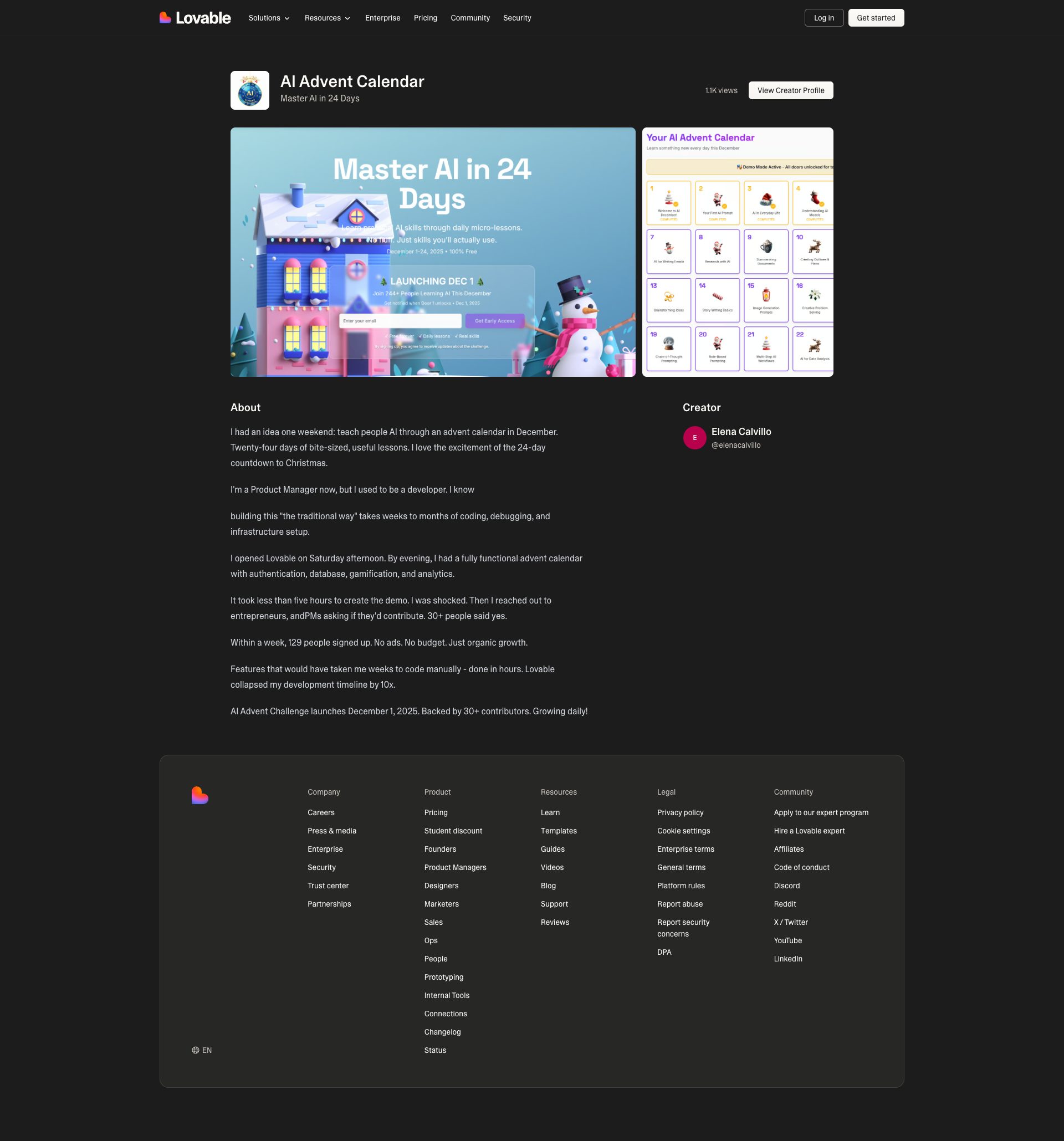

Testing of Holiday Ai Advent detected 77 issues across the site. The most critical finding: outbound Sentry telemetry may expose PII to a third party. Issues span the Security, Performance, A11y, and Other categories. Persona feedback rated Visual highest (7/10) and Accessibility lowest (6/10).

Qualitative Quality (chart: Holiday Ai Advent vs. Category Avg vs. Best in Category)

Pages Tested · 6 screenshots

Homepage

lovable.dev.products.holiday


Detected Issues · 77 total

1

Outbound Sentry telemetry may expose PII to third-party

CRIT P9

Prompt to Fix

In your Next.js frontend, update the Sentry integration to redact all PII before sending telemetry. Implement a beforeSend hook (or equivalent) to scrub user.email, user.name, user.id, IP addresses, and any URL query parameters or form data. Ensure telemetry is opt-in with a clear consent mechanism; provide an opt-out option for non-essential data collection. Validate by capturing network traffic to the Sentry endpoint and confirming no PII is transmitted. Add a privacy-compliance note in the app's privacy policy and update the Sentry config accordingly.

Why it's a bug

The network activity shows a POST to a third-party Sentry endpoint (ingest.us.sentry.io) for error/telemetry data. Without clear disclosure and explicit consent, this can transmit potentially identifiable information (PII) or user behavior details to a third party, creating privacy risk and regulatory exposure.

Why it might not be a bug

Sentry is a common tool for error tracking; if data is properly scrubbed and consent is provided, this may be acceptable. The log does not reveal payload contents, so the risk hinges on configuration (beforeSend scrub, redaction, and user consent).

Suggested Fix

1) Enable and enforce PII redaction in Sentry (e.g., beforeSend to scrub emails, user IDs, IPs, query params, and form data).
2) Disable or limit automatic collection of user-identifying fields unless explicit user consent is granted.
3) Ensure only non-identifying telemetry is sent; remove or mask personally identifiable fields from event data.
4) Add a user privacy consent option for telemetry in the UI and respect opt-out.
5) Review server and client logging to avoid capturing PII.
6) Document data handling in privacy policy and Sentry integration notes.
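The redaction step in the fix above can be sketched as a pure scrubbing function. This is a minimal sketch, not the site's actual configuration: `TelemetryEvent` is a simplified stand-in for the SDK's event type (an assumption made so the example is self-contained), and `scrubEvent`, `stripQuery`, and `EMAIL_PATTERN` are hypothetical names.

```typescript
// Simplified stand-in for the Sentry event shape. Assumption: the real SDK
// exports a richer type; only the fields scrubbed here are modeled.
interface TelemetryEvent {
  user?: { id?: string; email?: string; username?: string; ip_address?: string };
  request?: {
    url?: string;
    query_string?: string;
    data?: unknown;
    cookies?: Record<string, string>;
  };
  message?: string;
}

// Loose email matcher used to mask addresses embedded in free-text messages.
const EMAIL_PATTERN = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g;

// Drop the query string and fragment from a URL, keeping origin and path.
function stripQuery(url: string): string {
  const cut = url.search(/[?#]/);
  return cut === -1 ? url : url.slice(0, cut);
}

// Return a copy of the event with user identity, query parameters,
// form data, and cookies removed, and emails in the message masked.
function scrubEvent(event: TelemetryEvent): TelemetryEvent {
  const scrubbed: TelemetryEvent = { ...event };
  delete scrubbed.user;
  if (event.request) {
    const { url } = event.request;
    // Keep only the path-level URL; everything else on the request is dropped.
    scrubbed.request = url === undefined ? {} : { url: stripQuery(url) };
  }
  if (event.message !== undefined) {
    scrubbed.message = event.message.replace(EMAIL_PATTERN, "[redacted-email]");
  }
  return scrubbed;
}
```

A function like this would typically be passed as the `beforeSend` option of `Sentry.init`, alongside `sendDefaultPii: false`. Whether the real event payloads carry other identifying fields (breadcrumbs, tags, extra context) has to be checked against captured traffic, as the prompt above recommends.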

Why Fix

Reducing exposure of PII to third parties protects users, strengthens regulatory compliance (e.g., GDPR/CCPA), and preserves user trust. It also minimizes risk if the payloads contain sensitive data unintentionally.

Route To

Privacy Engineer

Page

Tester

Pete · Privacy Networking Analyzer

Technical Evidence

Network:

POST https://o4506071217143808.ingest.us.sentry.io/api/4506071220944896/envelope/?sentry_version=7&sentry_key=58ff8fddcbe1303f19bc19fbfed46f0f&sentry_client=sentry.javascript.nextjs%2F10.28.0 - Status: N/A

2

Sentry ingestion key exposed in client-side URL (sentry_key in query string)

CRIT P9

Prompt to Fix

In your Sentry integration, remove any manual construction of envelope URLs that include a query parameter named sentry_key. Replace with a standard Sentry DSN-based configuration and initialize the SDK with process.env.SENTRY_DSN (or a securely injected runtime variable). Do not log or expose the DSN/key in URLs or request query strings. Remove any code paths that POST directly to the envelope endpoint with query parameters. Example: import * as Sentry from '@sentry/nextjs'; Sentry.init({ dsn: process.env.SENTRY_DSN, tracesSampleRate: 0.2 }); Ensure server/client environments never expose SENTRY_KEY in logs, headers, or URL query strings. Validate that network requests to Sentry use the SDK-provided endpoints without manual key in the URL and enforce origin restrictions in Sentry project settings.

Why it's a bug

The network call to the Sentry ingestion endpoint includes a query parameter sentry_key=58ff8fddcbe1303f19bc19fbfed46f0f. Exposing authentication credentials in URL query parameters is a security risk because URLs can be logged by servers, proxies, and browser history, potentially leaking keys used for error ingestion. Even if the key is public, it should not be exposed in logs or referer headers. This increases the attack surface and could allow misuse of the Sentry project if logs are retained or leaked.

Why it might not be a bug

Sentry client keys are designed for public usage in client-side error reporting and may be exposed in client code. However, best practices discourage placing any keys in URLs, and exposing even public keys in logs can be problematic if logs are not properly protected. Reducing exposure is still beneficial for security hygiene.
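To make the "public key" point concrete, the sketch below shows how an envelope URL of the shape seen in the evidence can be derived from a DSN of the form `https://<publicKey>@<host>/<projectId>`. It is a minimal illustration under that assumption about the DSN layout, not the SDK's own code; `envelopeUrlFromDsn` is a hypothetical helper and the DSN in the usage note is a placeholder.

```typescript
// Build an envelope endpoint from a DSN of the form
// https://<publicKey>@<host>/<projectId>.
// Hypothetical helper for illustration; the SDK has its own internals.
function envelopeUrlFromDsn(dsn: string, client?: string): string {
  const parsed = new URL(dsn);
  const publicKey = parsed.username;
  const projectId = parsed.pathname.replace(/^\/+/, "");
  if (!publicKey || !projectId) {
    throw new Error("DSN must contain a public key and a project id");
  }
  // The public key travels as the sentry_key query parameter.
  const params = new URLSearchParams({ sentry_version: "7", sentry_key: publicKey });
  if (client) {
    params.set("sentry_client", client);
  }
  return `${parsed.protocol}//${parsed.host}/api/${projectId}/envelope/?${params.toString()}`;
}
```

Calling `envelopeUrlFromDsn("https://examplePublicKey@o0.ingest.example.invalid/12345", "sentry.javascript.nextjs/10.28.0")` yields a URL with the same `sentry_version`, `sentry_key`, and `sentry_client` parameters as the captured request. That is consistent with the request being SDK-generated rather than hand-built. If keeping the key out of browser-visible URLs matters, the SDK's `tunnel` option, which routes events through a same-origin endpoint, may be worth evaluating.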

Suggested Fix

Do not send the Sentry key (or DSN query parameters) in the URL. Configure Sentry using a DSN string provided via secure environment configuration and the Sentry SDK (e.g., @sentry/nextjs or @sentry/browser) without manually constructing envelope URLs with query parameters. Ensure that requests to the Sentry ingest endpoint are performed by the SDK and not via custom fetch calls that leak keys in the URL. If possible, restrict origins and rotate keys if exposure occurred.

Why Fix

Prevent potential leakage of Sentry credentials through logs or referer headers, reduce the risk of unauthorized event ingestion, and follow security best practices for third-party telemetry integration.

Route To

Security Engineer

Page

Tester

Sharon · Security Networking Analyzer

Technical Evidence

Console:

POST to https://o4506071217143808.ingest.us.sentry.io/api/4506071220944896/envelope/?sentry_version=7&sentry_key=58ff8fddcbe1303f19bc19fbfed46f0f&sentry_client=sentry.javascript.nextjs/10.28.0

Network:

POST https://o4506071217143808.ingest.us.sentry.io/api/4506071220944896/envelope/?sentry_version=7&sentry_key=58ff8fddcbe1303f19bc19fbfed46f0f&sentry_client=sentry.javascript.nextjs/10.28.0

3

AI endpoints invoked on page load (early GenAI calls)

CRIT P9

Prompt to Fix

Identify all AI/LLM endpoint calls that occur on initial page load in the frontend bundle. Refactor to defer such calls until user action or explicit consent. Add a consent prompt and feature flagging. Provide a concrete patch showing where to move fetch/axios calls and how to gate the AI initialization logic behind a user-initiated event.

Why it's a bug

The console shows repeated detection of AI endpoints and on-load AI requests, indicating GenAI endpoints are being called before user interaction. This can degrade performance, leak data, and erode user trust if consent isn’t explicitly obtained.

Why it might not be a bug

If the app deliberately preloads AI data to improve perceived performance, it might be justified. However, the evidence in the screenshot suggests implicit AI calls without clear user consent or visible UI indication.

Suggested Fix

Move AI endpoint calls behind explicit user action or consent. Lazy-load or dynamically import AI features only after interaction; implement a consent banner; gate AI calls behind feature flags; add caching to reduce repeat requests.
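One way to express the gating and caching described in this fix is a small wrapper that refuses to run until consent is granted and reuses a single request afterwards. This is a sketch under stated assumptions: `createConsentGate` and `Loader` are hypothetical names, and the report does not show which calls the page actually makes on load.

```typescript
type Loader<T> = () => Promise<T>;

// Wrap a loader so it runs at most once, and only after consent is granted.
function createConsentGate<T>(loader: Loader<T>) {
  let consented = false;
  let pending: Promise<T> | undefined;
  return {
    // Call when the user accepts the consent prompt.
    grantConsent(): void {
      consented = true;
    },
    // Call when the user opts out; later requests are rejected again.
    revokeConsent(): void {
      consented = false;
    },
    // Call from a user-initiated handler (e.g. a click), not from page-load code.
    request(): Promise<T> {
      if (!consented) {
        return Promise.reject(new Error("AI features require user consent"));
      }
      // Cache the promise so repeated clicks share one network request.
      if (pending === undefined) {
        pending = loader();
      }
      return pending;
    },
  };
}
```

In a Next.js page, the loader passed in might be a dynamic `import()` of the AI module or a `fetch` to the AI endpoint, with `grantConsent` wired to the consent banner and `request` wired to the button that opens the feature. Note that the cached promise is kept even if it rejects, so a production version would likely clear `pending` on failure to allow retries.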

Why Fix

Deferring these calls reduces unnecessary network traffic, protects user privacy, and improves perceived performance and reliability for non-AI users.

Route To

Frontend Engineer / Performance Engineer / Privacy Engineer

Page

Tester

Jason · GenAI Code Analyzer

Technical Evidence

Console:

⚠️ AI/LLM ENDPOINT DETECTED

Network:

GET https://lovable.dev/products/holiday-ai-advent - Status: 200